Beyond the Human Eye: How AI Achieves Unbiased & Accurate Exam Marking

For teachers, coaching institutes, colleges & schools

Why “Unbiased Marking” is Hard for Humans

Teachers aim to be fair, but manual grading is influenced by context. Even a highly experienced, honest teacher makes quick judgments based on patterns and expectations.

Bias in grading is rarely intentional. It usually appears through natural human limitations such as fatigue, time pressure, and the need to make fast decisions. When hundreds of papers must be checked, small inconsistencies add up and create “grading variance”.

Common sources of grading variance

- Fatigue bias: strictness changes between the first and last scripts of a session.

- Halo effect: neat handwriting / good presentation creates a positive first impression.

- Anchoring: if the first few papers are very strong (or weak), later scoring shifts unconsciously.

- Leniency drift: strictness changes across days, evaluators, or batches.

- Time pressure: less detailed feedback when deadlines are close.
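One rough way to see fatigue or leniency drift in your own marking is to compare scores at the start and end of a session. The sketch below is only an illustration: the marks are made-up numbers, and `session_drift` is a hypothetical helper, not part of any product.

```python
from statistics import mean, pstdev

def session_drift(scores: list[float], window: int = 3) -> float:
    """Mean of the last `window` scores minus the mean of the first `window`."""
    if len(scores) < 2 * window:
        raise ValueError("need at least 2 * window scores")
    return mean(scores[-window:]) - mean(scores[:window])

# Hypothetical marks (out of 10) given to answers of similar quality,
# listed in the order they were graded during one session.
session = [7.0, 7.5, 7.0, 6.5, 6.5, 6.0, 6.0, 5.5]

drift = session_drift(session)   # negative: marking became stricter over time
spread = pstdev(session)         # overall spread of marks in the session
print(f"drift: {drift:+.2f} marks, spread: {spread:.2f}")
```

A drift far from zero on comparable answers suggests strictness changed during the session rather than answer quality.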

How AI “Levels the Playing Field”

AI systems don’t know a student’s reputation, roll number, or past performance—unless you explicitly provide it. In a rubric-driven workflow, the model evaluates the answer content against predefined expectations and awards marks based on scoring rules.

When implemented correctly, this means a B+-level answer in the first batch is scored with the same strictness as a B+-level answer in the last batch. That’s the heart of unbiased marking: the same scoring rules, applied the same way to every script.

Key features of objective AI marking

- Rubric fidelity: the same marking logic is applied to every student, every time.

- Optional anonymization: student identity can be stripped before evaluation.

- Repeatability: re-runs produce consistent outcomes when rubric and inputs are unchanged.

- Question-wise scoring: marks are assigned per question/sub-question, reducing guesswork.

- Auditable feedback: comments can be aligned to missing points (“no definition”, “missing example”).
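As an illustration of optional anonymization, the sketch below strips identity fields from a submission and replaces them with an opaque token before evaluation. The field names (`name`, `roll_number`, `section`), the `anonymize` helper, and the secret key are all assumptions made for this example.

```python
import hashlib
import hmac

# Hypothetical identity fields; adjust to whatever your submissions contain.
IDENTITY_FIELDS = {"name", "roll_number", "section"}

def anonymize(submission: dict, secret: bytes) -> dict:
    """Return a copy without identity fields, keyed by an opaque token."""
    token = hmac.new(
        secret, submission["roll_number"].encode(), hashlib.sha256
    ).hexdigest()[:12]
    content = {k: v for k, v in submission.items() if k not in IDENTITY_FIELDS}
    return {"token": token, **content}

submission = {
    "name": "A. Student",
    "roll_number": "2024-017",
    "section": "B",
    "answers": {"Q1": "Photosynthesis is ..."},
}
blind = anonymize(submission, secret=b"institution-only-key")
print(sorted(blind))  # only 'answers' and 'token' remain
```

Because the token is derived from the roll number with a key only the institution holds, marks can be matched back to students after scoring without exposing identity during evaluation.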

How It Works

Step 1: Scan & upload (quality matters)

Students scan and upload answer sheets. Clear scans reduce handwritten text recognition (HTR) errors and improve downstream evaluation quality.

Step 2: Computer vision / NLP / handwriting recognition (HTR)

The system converts handwriting into machine-readable text while preserving layout context (where answers appear on the page).

Step 3: Question mapping

Answers are mapped to the correct questions/sub-questions so scoring remains aligned to the paper pattern and total marks.
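A minimal sketch of question mapping, assuming the recognized text arrives as a list of lines and that students label answers like `Q1.` or `Q2(a)`. Real scripts vary much more than this, so treat the pattern and the `map_answers` helper as hypothetical.

```python
import re

# Matches labels such as "Q1.", "Q2(a)" or "q3(b):" at the start of a line.
LABEL = re.compile(r"^\s*Q(\d+)\s*(?:\(([a-z])\))?[.:)]?\s*", re.IGNORECASE)

def map_answers(lines: list[str]) -> dict[str, str]:
    """Group recognized lines under the most recent question label."""
    answers: dict[str, list[str]] = {}
    current = None
    for line in lines:
        match = LABEL.match(line)
        if match:
            number, part = match.group(1), match.group(2)
            current = f"Q{number}{part.lower() if part else ''}"
            answers.setdefault(current, [])
            line = line[match.end():]  # keep only the text after the label
        if current is not None and line.strip():
            answers[current].append(line.strip())
    return {q: " ".join(parts) for q, parts in answers.items()}

page = [
    "Q1. Photosynthesis is the process",
    "by which plants make food.",
    "Q2(a) Force equals mass times acceleration.",
    "Q2(b): Unit is newton.",
]
mapped = map_answers(page)
```

Lines that appear before any label are ignored here; a production workflow would more likely flag them for review.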

Step 4: Rubric creation & approval

A teacher-approved rubric defines what earns marks. This is where objectivity is “locked in”. You can set strictness, expected points, and partial-marking rules.
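One way to picture a rubric is as a small data structure that can be checked before use. The class and field names below (`RubricPoint`, `QuestionRubric`, `allow_partial`, `approved_by`) are illustrative assumptions, not the format of any particular tool.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class RubricPoint:
    label: str    # what the student is expected to cover
    marks: float  # marks awarded when the point is present

@dataclass(frozen=True)
class QuestionRubric:
    question_id: str
    max_marks: float
    points: tuple[RubricPoint, ...]
    allow_partial: bool = True  # False would mean all-or-nothing scoring
    approved_by: str = ""       # empty until a teacher signs off

    def problems(self) -> list[str]:
        """Return reasons the rubric is not ready for use (empty if ready)."""
        issues = []
        total = sum(p.marks for p in self.points)
        if abs(total - self.max_marks) > 1e-9:
            issues.append(f"points add up to {total:g}, expected {self.max_marks:g}")
        if not self.approved_by:
            issues.append("not yet approved by a teacher")
        return issues

draft = QuestionRubric(
    question_id="Q1",
    max_marks=5,
    points=(RubricPoint("definition", 2), RubricPoint("example", 1)),
)
approved = QuestionRubric(
    question_id="Q1",
    max_marks=5,
    points=(
        RubricPoint("definition", 2),
        RubricPoint("process", 2),
        RubricPoint("example", 1),
    ),
    approved_by="teacher-01",
)
print(draft.problems())
print(approved.problems())
```

Making the rubric immutable (`frozen=True`) reflects the idea that the scoring rules should not change once marking starts.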

Step 5: Evaluation + partial marks

AI compares the student answer against the rubric, awards partial credit for partially correct concepts, and generates short comments linked to deductions.
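The sketch below illustrates partial marking with remarks tied to each deduction. It uses plain keyword matching as a stand-in for the model's comparison, which is an assumption made purely to keep the example runnable; the rubric contents and the `score_answer` helper are hypothetical.

```python
def score_answer(answer: str, rubric: list[dict]) -> tuple[float, list[str]]:
    """Award marks per rubric point and explain every deduction."""
    text = answer.lower()
    awarded = 0.0
    remarks = []
    for point in rubric:
        # Stand-in check: a real system would judge meaning, not keywords.
        if any(keyword in text for keyword in point["keywords"]):
            awarded += point["marks"]
        else:
            remarks.append(f"-{point['marks']:g}: missing {point['label']}")
    return awarded, remarks

# Hypothetical 5-mark rubric for "Define photosynthesis with an example".
rubric = [
    {"label": "definition", "marks": 2, "keywords": ["sunlight", "light energy"]},
    {"label": "products", "marks": 2, "keywords": ["glucose", "oxygen"]},
    {"label": "example", "marks": 1, "keywords": ["for example", "e.g."]},
]

marks, remarks = score_answer(
    "Photosynthesis is how plants use sunlight to make glucose.", rubric
)
print(marks, remarks)
```

Each remark names the rubric point that cost marks, which is what makes the feedback auditable.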

Step 6: Outputs

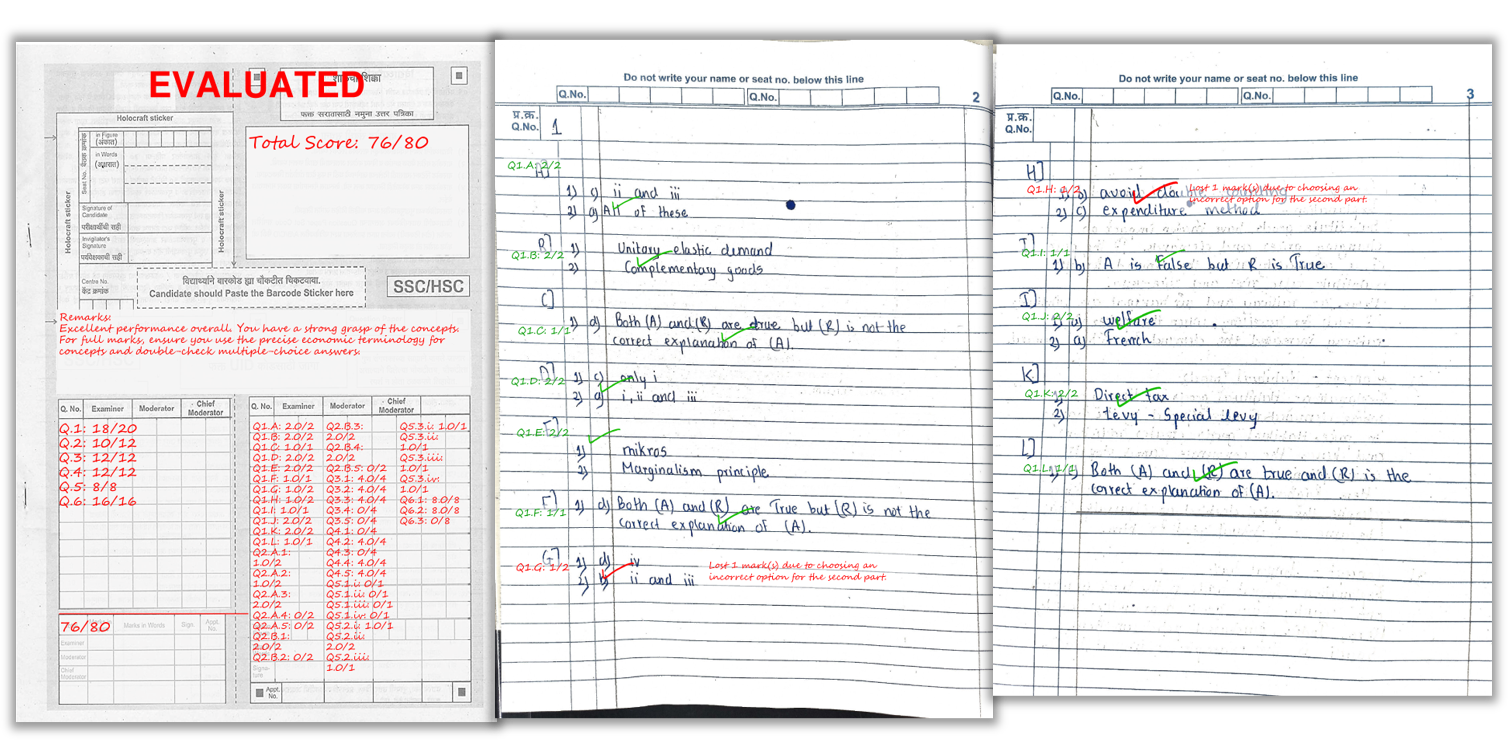

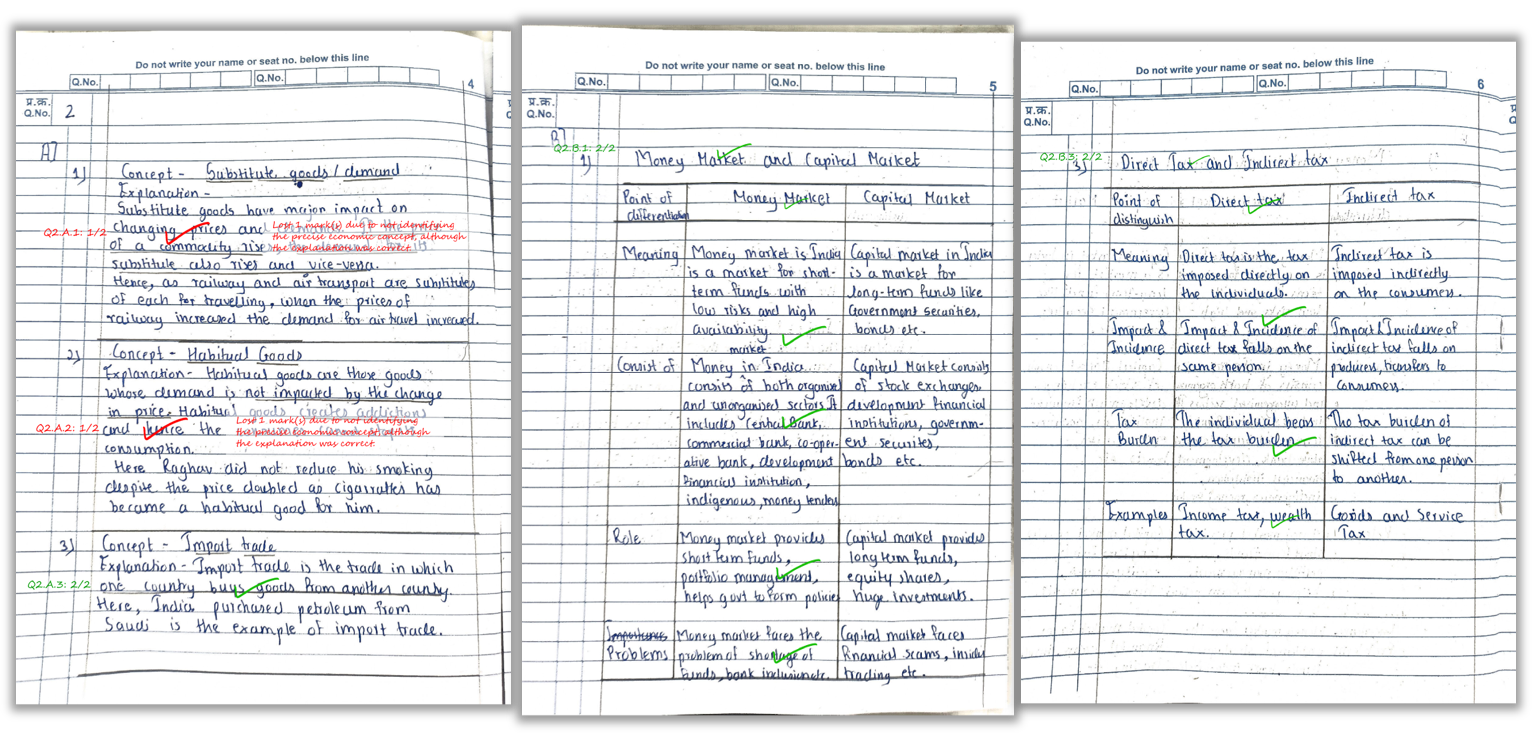

- Marked PDF with question-wise marks and remarks on the answer sheet

- Excel summary with total score + overall feedback per student

- Batch analytics (optional) to highlight weak questions/topics
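To make the summary and batch analytics concrete, here is a small sketch that builds a per-student summary and flags questions where the class average falls below half marks. The data, the 50% threshold, and the use of CSV (which spreadsheet tools can open) instead of a native Excel file are assumptions for illustration.

```python
import csv
import io
from statistics import mean

MAX_MARKS = {"Q1": 5, "Q2": 5, "Q3": 10}  # hypothetical paper pattern

# Hypothetical question-wise marks per (anonymized) student.
results = {
    "S001": {"Q1": 4, "Q2": 2, "Q3": 8},
    "S002": {"Q1": 5, "Q2": 1, "Q3": 7},
    "S003": {"Q1": 3, "Q2": 2, "Q3": 9},
}

def summary_csv(results: dict) -> str:
    """One row per student: question-wise marks plus the total."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["student", *MAX_MARKS, "total"])
    for student, marks in sorted(results.items()):
        row = [marks.get(q, 0) for q in MAX_MARKS]
        writer.writerow([student, *row, sum(row)])
    return buffer.getvalue()

def weak_questions(results: dict, threshold: float = 0.5) -> list[str]:
    """Questions whose average score is below `threshold` of the maximum."""
    weak = []
    for question, max_marks in MAX_MARKS.items():
        average = mean(marks.get(question, 0) for marks in results.values())
        if average / max_marks < threshold:
            weak.append(question)
    return weak

report = summary_csv(results)
weak = weak_questions(results)
print(report)
print("Weak questions:", weak)
```

A question flagged this way may point to a topic that needs re-teaching, or to a question that was ambiguous.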

What AI Can and Can’t Do (Honest Expectations)

AI reduces bias and inconsistency, but it is not a replacement for academic judgment in every scenario. The most reliable results come from a strong rubric and good input quality.

- Works best for: structured answers, clear handwriting, rubric-based scoring, consistent patterns.

- Needs care for: very creative responses, extremely messy handwriting, ambiguous questions.

Scanning Tips Checklist (improves fairness + accuracy)

- Bright lighting: avoid shadows and glare

- Flat pages: no curvature near binding

- Full page visible: don’t cut margins/corners

- Correct order: scan pages sequentially

- Legibility: encourage spacing and clean headings

See how marks and remarks appear directly on the student’s answer sheet.

FAQ

1) Does AI remove bias completely?

Not completely. AI reduces human bias by applying the same rubric consistently, but the result is only as fair as the rubric and as accurate as the scan quality allows.

2) Can AI mark subjective answers fairly?

Yes, when evaluation is rubric-based with clear expected points and partial marking rules.

3) How does AI handle neat vs messy handwriting?

Neat handwriting and clean scans improve OCR/HTR quality. Very unclear writing can reduce accuracy—just like manual checking.

4) What outputs do teachers receive?

Marked PDFs with question-wise scores and remarks, plus an Excel summary with totals and feedback per student.

5) Can institutions anonymize students?

Yes. Many workflows strip student identity before evaluation so scoring remains purely content-based.

Ready to automate your evaluation?

Explore Key Features, see Pricing, or Sign Up and start with free credits.