What Makes Competitive Exam Answer Sheets Hard to Check

Competitive exams like UPSC Mains, CA/CS/CMA, and JEE often involve long answers, structured reasoning, calculations, diagrams, and multiple valid ways to reach the correct conclusion. Unlike objective tests, evaluation must consider:

- Step-wise correctness: method matters, not just the final line

- Concept coverage: key points must be present

- Partial marks: for partially correct reasoning or steps

- Answer structure: especially for UPSC and CA descriptive formats

For coaching institutes running frequent test series — JEE, NEET, CA Foundation, CS Executive, CMA Inter — the volume problem is acute. A single test for 100 students can generate 600–800 pages of answer sheets. Manual checking at that volume takes days and produces inconsistent results.

How AI Checks Competitive Exam Answer Sheets

A good AI checking system combines handwriting recognition (OCR/HTR), question mapping, and semantic evaluation. Instead of searching for exact wording, it checks meaning, logic, and coverage against a teacher-approved rubric.

Semantic evaluation — meaning-first scoring

Semantic evaluation means the model compares what the student is saying with what the rubric expects — even if wording differs. This matters for competitive exams because students may use different examples, write in their own style, present steps in a different order, or reach the correct conclusion through an alternative valid approach. All of these can earn full marks when the rubric is designed to allow it.
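Here is a minimal Python sketch of a coverage check. A rubric point counts as covered when some sentence of the answer is close enough to it in vector space. A production system would plug a sentence-embedding model in as `embed`. To keep the sketch runnable with the standard library only, a bag-of-words vector stands in for it. The function names and the 0.5 threshold are assumptions for illustration, not E-Valuate's implementation.

```python
import math
import re
from collections import Counter
from typing import Callable, Dict

Vector = Dict[str, float]


def bag_of_words(text: str) -> Vector:
    """Stand-in for an embedding model: lowercase word counts."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {word: float(count) for word, count in Counter(words).items()}


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity of two sparse vectors; 0.0 if either is empty."""
    dot = sum(value * b.get(key, 0.0) for key, value in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def point_covered(rubric_point: str, answer: str,
                  embed: Callable[[str], Vector] = bag_of_words,
                  threshold: float = 0.5) -> bool:
    """True if any sentence of the answer is close enough to the rubric point."""
    sentences = [s for s in re.split(r"[.!?]\s*", answer) if s.strip()]
    target = embed(rubric_point)
    best = max((cosine(target, embed(s)) for s in sentences), default=0.0)
    return best >= threshold


answer = ("Rising prices mean inflation reduces the purchasing power of money. "
          "Wages usually lag behind.")
print(point_covered("inflation reduces purchasing power", answer))  # True
print(point_covered("fiscal deficit", answer))                      # False
```

The stand-in has a clear limitation. It only rewards shared vocabulary, so a paraphrase such as "prices rose" in place of "inflation" would score zero. Swapping in a real embedding function is what lets genuinely different wording earn credit.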

The Full Checking Pipeline

Step 1 — Scan and upload

Students scan answer sheets using the E-Valuate mobile app or a flatbed scanner. Scan quality directly affects accuracy: clear, flat, well-lit pages produce the best results.

Step 2 — OCR/HTR reads handwriting

The system extracts readable text from handwriting and preserves layout context — identifying where each answer begins and ends, even across long multi-page scripts.

Step 3 — Question mapping

Competitive exam answers can span multiple pages. Mapping ensures each segment is evaluated under the correct question and sub-question. Without mapping, step-wise and partial marks become unreliable.
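The following is a minimal sketch of question mapping. It assumes OCR has already returned each page as a list of text lines in scan order, and that students label answers as "Q3", "Q.3" or "Q3(b)". The label pattern and `map_answers` are hypothetical, and real scripts need more robust label detection. The key idea is that the current question carries over a page break until a new label appears.

```python
import re
from typing import Dict, List

# Assumed label format: "Q3", "Q.3", "Q3(b)" or "Q3(b):" at the start of a line.
LABEL = re.compile(r"^\s*Q\.?\s*(\d+)\s*(\([a-z]\))?[.:)]?\s*", re.IGNORECASE)


def map_answers(pages: List[List[str]]) -> Dict[str, List[str]]:
    """Group OCR'd lines under question labels, carrying a label across page breaks."""
    segments: Dict[str, List[str]] = {}
    current = "unmapped"  # anything written before the first label
    for page in pages:  # pages must be in scan order
        for line in page:
            match = LABEL.match(line)
            if match:
                number, part = match.group(1), (match.group(2) or "").lower()
                current = f"Q{number}{part}"
                line = line[match.end():]  # keep any answer text after the label
            if line.strip():
                segments.setdefault(current, []).append(line.strip())
    return segments


pages = [
    ["Q1. GDP measures the value of final goods", "produced in a year.", "Q2(a) SI = PRT/100"],
    ["= 1000 x 5 x 2 / 100 = 100", "Q2(b): Rs 100"],
]
print(map_answers(pages))
```

The working line at the top of page 2 lands under Q2(a) because no new label has appeared yet. If a student returns to a question later in the script, those lines are appended to the same segment rather than lost.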

Step 4 — Rubric creation and approval

The teacher or coordinator approves a rubric defining: key points or steps expected per question, marks per point or step, partial marking rules, and strictness level. For CA/CS numericals this might be "2 marks for correct formula, 2 marks for substitution, 1 mark for final answer." For UPSC answers it might be "1 mark each for any 5 of the following 8 arguments."
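One way to represent the two rubric styles above as data is sketched below in Python. The class names, fields and step names are hypothetical, and strictness level is left out. The two instances encode the example rules quoted above.

```python
from dataclasses import dataclass
from typing import Dict, Set


@dataclass
class StepRubric:
    """Fixed marks per named step, e.g. a CA/CS numerical."""
    marks_per_step: Dict[str, float]

    def max_marks(self) -> float:
        return sum(self.marks_per_step.values())

    def score(self, steps_found: Set[str]) -> float:
        return sum(m for step, m in self.marks_per_step.items() if step in steps_found)


@dataclass
class AnyKOfNRubric:
    """A fixed mark for each acceptable point, capped at k points (e.g. UPSC arguments)."""
    points: Set[str]
    k: int
    marks_per_point: float

    def max_marks(self) -> float:
        return self.k * self.marks_per_point

    def score(self, points_found: Set[str]) -> float:
        matched = len(self.points & points_found)  # ignore anything outside the rubric
        return min(matched, self.k) * self.marks_per_point


# "2 marks for correct formula, 2 marks for substitution, 1 mark for final answer"
ca = StepRubric({"formula": 2, "substitution": 2, "final_answer": 1})
# "1 mark each for any 5 of the following 8 arguments"
upsc = AnyKOfNRubric(points={f"argument_{i}" for i in range(1, 9)}, k=5, marks_per_point=1)

print(ca.score({"formula", "substitution"}), "/", ca.max_marks())                  # 4 / 5
print(upsc.score({f"argument_{i}" for i in range(1, 7)}), "/", upsc.max_marks())  # 5 / 5
```

The cap in `AnyKOfNRubric.score` is what makes "any 5 of 8" work. A student who makes six valid arguments still scores 5, and one who makes only two scores 2.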

Step 5 — Step-wise marking and partial marks

AI awards marks for correct steps and generates short remarks for deductions: "missing assumption," "step skipped," "definition incomplete," "no diagram," "calculation error in step 3." Writing remarks this detailed on every script is rarely practical when checking by hand at this volume.
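Below is a minimal sketch of how step-wise marks and deduction remarks might be produced once the evaluator has decided which steps are present. Detecting the steps is the hard part and is simply assumed here as the `found` set. The `Step` type and the remark wording are illustrative.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass
class Step:
    name: str
    marks: float
    remark_if_missing: str  # short deduction remark written on the sheet


def mark_steps(steps: List[Step], found: Set[str]) -> Tuple[float, List[str]]:
    """Award marks for each detected step and collect a remark for each missing one."""
    awarded = 0.0
    remarks: List[str] = []
    for step in steps:
        if step.name in found:
            awarded += step.marks
        else:
            remarks.append(f"-{step.marks:g}: {step.remark_if_missing}")
    return awarded, remarks


steps = [
    Step("formula", 2, "formula missing"),
    Step("substitution", 2, "step skipped"),
    Step("final_answer", 1, "calculation error in final step"),
]
print(mark_steps(steps, {"formula", "substitution"}))
# (4.0, ['-1: calculation error in final step'])
```

This is how a correct method with an arithmetic slip keeps most of its marks. The student loses only the final-answer mark, and the sheet records why.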

Step 6 — Output

- Marked PDF with question-wise marks and remarks on the answer sheet

- Excel summary with total marks and feedback per student

- Optional batch analytics showing weak questions and topics

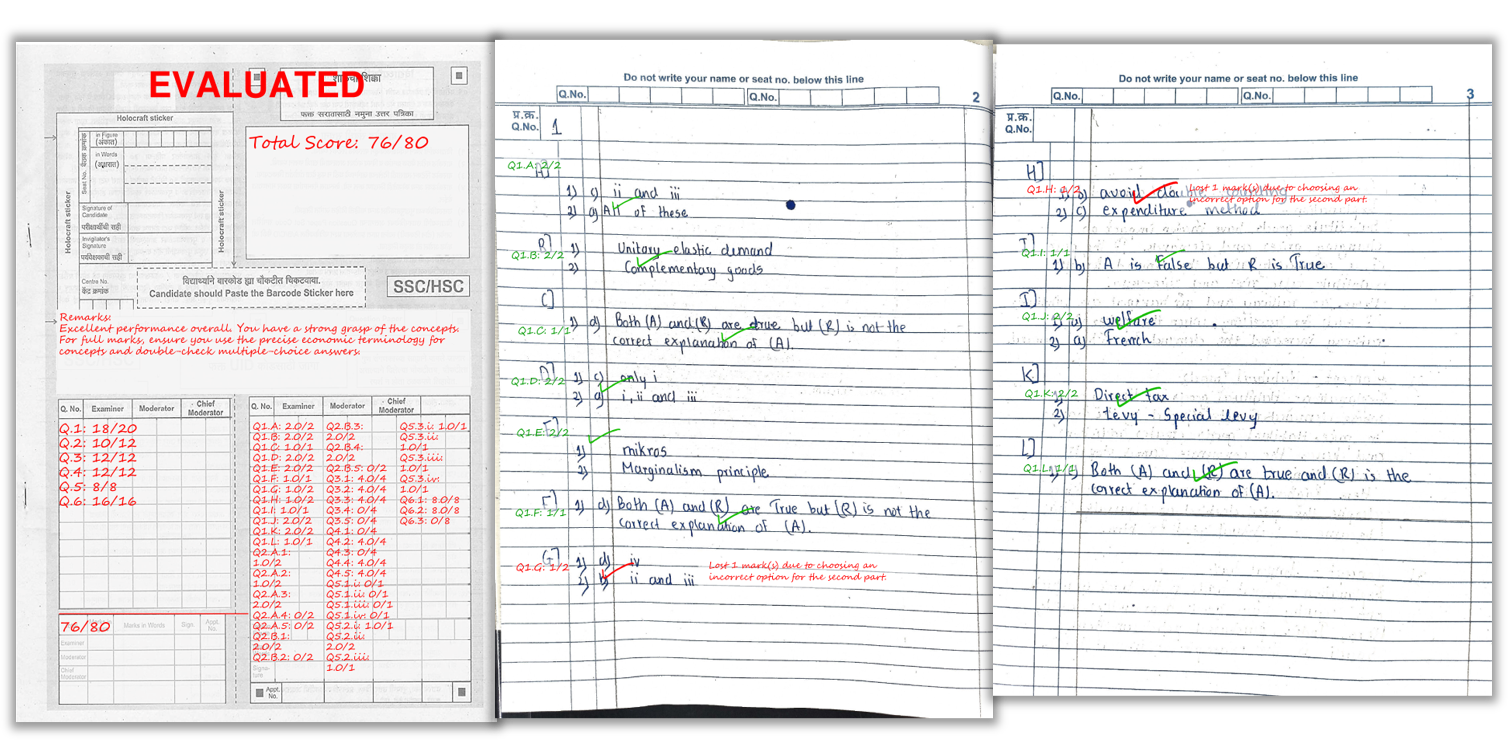

A real example of how marks and remarks appear on a student’s answer sheet after AI checking.
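The Excel summary and weak-question analytics listed above can be sketched with the Python standard library. This sketch writes CSV, which Excel opens directly, rather than a native .xlsx file. It treats a question as weak when its batch-average score falls below an assumed 50% of full marks. Both choices and the function names are assumptions.

```python
import csv
import io
from typing import Dict, List

Results = Dict[str, Dict[str, float]]  # student -> question -> marks awarded


def summary_csv(results: Results) -> str:
    """One row per student with question-wise marks and a total; Excel opens CSV directly."""
    questions = sorted({q for marks in results.values() for q in marks})
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["student", *questions, "total"])
    for student, marks in results.items():
        row = [marks.get(q, 0) for q in questions]
        writer.writerow([student, *row, sum(row)])
    return buffer.getvalue()


def weak_questions(results: Results, max_marks: Dict[str, float],
                   cutoff: float = 0.5) -> List[str]:
    """Questions whose batch-average score is below `cutoff` of full marks."""
    if not results:
        return []
    weak = []
    for q, full in max_marks.items():
        average = sum(marks.get(q, 0) for marks in results.values()) / len(results)
        if average < cutoff * full:
            weak.append(q)
    return weak


batch = {"Asha": {"Q1": 4, "Q2": 1}, "Ravi": {"Q1": 5, "Q2": 2}}
print(summary_csv(batch))
print(weak_questions(batch, {"Q1": 5, "Q2": 5}))  # ['Q2']
```

Question labels are sorted as plain strings, so Q10 would sort before Q2. A real report would follow the paper's own question order.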

Where AI Checking Works Best — and Where It Needs Care

| Exam Type | AI Suitability | Notes |
|---|---|---|
| JEE / NEET mock tests | Excellent | High volume, rubric-defined steps, fast feedback needed |
| CA / CS / CMA internals | Excellent | Structured formats, concept-coverage checking |
| UPSC Mains practice | Good | Needs well-designed rubric for argument coverage |
| Open-ended essays (no model answer) | Needs care | Rubric must define expectations explicitly |
| Very messy handwriting / poor scans | Reduced accuracy | Same issue affects manual checking |

Scanning Tips for Competitive Exam Scripts

- Bright, even lighting: avoid shadows across the text

- Flat pages: reduce curvature near the binding

- Full page in frame: all four corners visible

- Scan sequentially: page order helps question mapping

- Dark ink: faint pencil or light ink reduces OCR quality
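Some of these tips can be checked automatically before upload. The sketch below takes a grayscale page as a list of pixel rows (0 = black, 255 = white) and flags three problems. A page is flagged as too dark when its median pixel, taken as the paper, is dim. Writing is flagged as faint when the darkest pixels, taken as the ink, are not much darker than the paper. Blur is flagged using the variance-of-Laplacian measure. All three thresholds are assumed placeholders that would need tuning on real scans. Loading an image into this format (for example with Pillow) is left out.

```python
from statistics import pvariance
from typing import List

Image = List[List[int]]  # grayscale rows: 0 = black, 255 = white


def laplacian_variance(img: Image) -> float:
    """Variance of a 4-neighbour Laplacian over interior pixels; low values suggest blur."""
    responses = [
        img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1] - 4 * img[y][x]
        for y in range(1, len(img) - 1)
        for x in range(1, len(img[0]) - 1)
    ]
    return pvariance(responses) if responses else 0.0


def scan_warnings(img: Image, min_paper: int = 150, min_ink_gap: int = 60,
                  min_sharpness: float = 100) -> List[str]:
    """Flag pages that look too dark, too faint, or blurred. Thresholds are placeholders."""
    pixels = sorted(p for row in img for p in row)
    paper = pixels[len(pixels) // 2]   # median pixel, assumed to be background paper
    ink = pixels[len(pixels) // 100]   # roughly the 1st percentile, assumed to be ink
    warnings = []
    if paper < min_paper:
        warnings.append("too dark: use brighter, even lighting")
    if paper - ink < min_ink_gap:
        warnings.append("faint writing: use darker ink instead of light pencil")
    if laplacian_variance(img) < min_sharpness:
        warnings.append("possibly blurred: rescan with the page flat and steady")
    return warnings


# A white page with one dark vertical stroke passes; a flat grey page fails all three checks.
sharp = [[0 if x == 5 else 255 for x in range(10)] for _ in range(10)]
grey = [[60] * 10 for _ in range(10)]
print(scan_warnings(sharp))      # []
print(len(scan_warnings(grey)))  # 3
```

Running a check like this at upload time lets a page be rescanned immediately, which is cheaper than discovering a low-confidence OCR result after marking.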

Frequently Asked Questions

Can AI check UPSC Mains answer writing for mock tests?

Yes — when the rubric defines key points, structure expectations, and partial marking rules, AI can evaluate UPSC-style long answers reliably. It checks whether key arguments and examples are present and awards marks accordingly.

Can AI award step-wise marks for JEE and CA numerical problems?

Yes, when the rubric splits marks across steps — formula selection, substitution, calculation, and final answer. AI awards marks at each correct step, even if the final answer has an arithmetic error.

Will AI penalise students who use a different but valid approach?

A semantic evaluation system checks whether the logic and key concepts are present, not whether exact wording matches. Valid alternative approaches can earn full marks when the rubric allows for concept equivalence.

Is AI paper checking useful for CA/CS/CMA descriptive answers?

Yes. Rubric-based marking checks concept coverage and awards partial marks for partially correct answers — which is standard in professional exam formats.

How fast are results for a coaching institute test series batch?

Smaller batches of 20–30 students complete within an hour. Batches of 100–150 students typically take 3 to 5 hours — far faster than the 2 to 3 day manual turnaround most coaching institutes currently manage.

What outputs does a coaching institute receive after AI checking?

Marked PDFs for each student — with per-question scores and remarks on the sheet — and an Excel summary with total marks and feedback for the full batch.

Ready to automate your answer sheet checking?

E-Valuate AI processes handwritten papers for schools and coaching institutes across India. Sign up free to get 3,000 credits (worth ₹3,000).
