Why Unbiased Marking Is Hard for Human Examiners

Teachers aim to be fair, and most are. But research on grading consistency repeatedly finds that manual checking at scale produces measurable variance, even when the same teacher checks the same set of papers twice. This is not a failure of character. It is a structural limitation of sustained cognitive work under volume and time pressure.

In Indian schools and coaching institutes, where a single teacher might check 80 to 200 papers for a single exam, the conditions that create grading variance are almost always present.

The five main sources of grading variance

- Examiner fatigue: strictness changes measurably between the first paper and the last paper of a checking session. This is documented in grading research across countries and subjects.

- Halo effect: neat handwriting, clean presentation, and a well-organised answer sheet create a positive first impression that can inflate marks — even before the content is evaluated.

- Anchoring: if the first few papers are exceptional or very weak, the evaluator's baseline shifts unconsciously, and subsequent papers are marked relative to that anchor rather than against the rubric.

- Leniency drift: standards tend to loosen over the course of a long checking session, so later papers are often marked more generously than earlier papers of the same quality.

- Time pressure: when deadlines are close, examiners give less detailed feedback and make faster (and less accurate) marking decisions.
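A teacher who wants to see whether these effects show up in their own checking can compare two passes over the same papers and look at whether marks trend with checking order. The sketch below is illustrative only: the function names and the sample marks are made up, and a slope in the marks is a hint rather than proof of drift.

```python
from statistics import mean

def mean_abs_difference(first_pass, second_pass):
    """Average absolute gap between two passes over the same papers."""
    if len(first_pass) != len(second_pass):
        raise ValueError("both passes must cover the same papers")
    return mean(abs(a - b) for a, b in zip(first_pass, second_pass))

def order_slope(marks_in_checking_order):
    """Least-squares slope of marks against position in the session.

    A clearly positive slope is consistent with leniency drift, although it
    can also mean that stronger papers happened to come later.
    """
    n = len(marks_in_checking_order)
    if n < 2:
        raise ValueError("need at least two papers")
    positions = range(n)
    x_bar = mean(positions)
    y_bar = mean(marks_in_checking_order)
    numerator = sum((x - x_bar) * (y - y_bar)
                    for x, y in zip(positions, marks_in_checking_order))
    denominator = sum((x - x_bar) ** 2 for x in positions)
    return numerator / denominator

# Made-up data: the same five papers marked on two occasions.
first_pass = [12, 14, 9, 16, 11]
second_pass = [13, 14, 11, 15, 12]
print(mean_abs_difference(first_pass, second_pass))
print(order_slope(first_pass))
```

Shuffling the papers before the second pass helps separate genuine drift from differences in paper quality.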

How AI Reduces These Sources of Variance

Rubric fidelity — the same rules, every paper

The teacher defines the marking scheme once and approves it. The AI applies exactly those rules to every single paper in the batch — paper 1 and paper 200 are marked against the identical rubric with the identical strictness level. There is no fatigue, no drift, no anchoring.

No presentation bias

AI evaluates text content, not handwriting aesthetics. A neatly presented paper with an incorrect answer earns the same score as a messy paper with the same incorrect answer. Marks come from concept coverage and logical correctness — not penmanship.

Optional anonymisation

Student names and roll numbers can be stripped before evaluation. With anonymisation enabled, the AI has no access to student identity, prior performance, or reputation, so scoring is based purely on the content of the answers.
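As a rough illustration of what stripping identity can look like, the sketch below swaps name and roll number for an opaque paper ID and keeps the mapping separately so marks can be re-attached afterwards. The field names (`name`, `roll_no`, `answers`) and the `anonymise` helper are assumptions made for this example, not E-Valuate's actual data model. It also assumes identity lives in separate fields; a name written on the page itself would need the header region redacted as well.

```python
import hashlib

def anonymise(submissions, salt):
    """Replace identity fields with an opaque ID before evaluation.

    Returns (anonymous_papers, id_map). The id_map stays with the teacher so
    that marks can be matched back to students after checking.
    """
    anonymous_papers = []
    id_map = {}
    for sub in submissions:
        digest = hashlib.sha256((salt + sub["roll_no"]).encode("utf-8")).hexdigest()
        paper_id = "paper-" + digest[:10]
        id_map[paper_id] = {"name": sub["name"], "roll_no": sub["roll_no"]}
        # Only the opaque ID and the answers are passed on for evaluation.
        anonymous_papers.append({"paper_id": paper_id, "answers": sub["answers"]})
    return anonymous_papers, id_map

# Placeholder data for illustration.
submissions = [
    {"name": "Student A", "roll_no": "101", "answers": {1: "Plants use sunlight."}},
    {"name": "Student B", "roll_no": "102", "answers": {1: "Leaves contain chlorophyll."}},
]
papers, id_map = anonymise(submissions, salt="unit-test-3")
print(papers[0]["paper_id"], sorted(papers[0].keys()))
```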

Repeatability

Running the same papers through the same rubric twice produces the same marks. This repeatability, which manual checking cannot offer at scale, makes the marking auditable and creates a defensible record for any mark disputes.
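Repeatability is easy to verify rather than assume. The sketch below records a fingerprint of the exact rubric and answers that went into a run, then lists any papers whose marks differ between two runs. Both helpers are hypothetical and only show the kind of audit record that becomes possible; they are not part of any documented E-Valuate interface.

```python
import hashlib
import json

def run_fingerprint(rubric, answers):
    """Stable hash of the exact inputs to one evaluation run."""
    payload = json.dumps({"rubric": rubric, "answers": answers},
                         sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def compare_runs(run_a, run_b):
    """Return the paper IDs whose marks differ between two runs."""
    return sorted(pid for pid in run_a.keys() | run_b.keys()
                  if run_a.get(pid) != run_b.get(pid))

rubric = {"q1": {"max_marks": 3, "points": ["sunlight", "chlorophyll", "glucose"]}}
answers = {"paper-1": "Plants use sunlight.", "paper-2": "Glucose is produced."}
print(run_fingerprint(rubric, answers))

# Made-up marks from two runs; an empty list means the runs agree.
print(compare_runs({"paper-1": 1, "paper-2": 1}, {"paper-1": 1, "paper-2": 1}))
```

Storing the fingerprint next to the marks shows, in a dispute, that a re-run used the same rubric and the same recognised text.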

The Full Checking Pipeline

Step 1 — Scan and upload

Students scan and submit answer sheets via the E-Valuate app. Clear scans reduce OCR/HTR errors and improve downstream evaluation quality.

Step 2 — OCR/HTR reads handwriting

The system converts handwriting into readable text while preserving layout context — identifying question numbers, answer regions, and page structure.

Step 3 — Question mapping

Each answer is matched to its correct question, enabling reliable per-question marks and partial credit.
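A simplified picture of question mapping, assuming students label each answer with something like "Q1." or "Q2)" at the start of a line. Real answer sheets are messier, and the pattern and the `map_answers` name are assumptions made for illustration rather than the system's actual method.

```python
import re

# Matches labels such as "Q1.", "Q 2)" or "Q.3:" at the start of a line.
QUESTION_LABEL = re.compile(r"^\s*Q\.?\s*(\d+)\s*[.):]?\s*", re.MULTILINE)

def map_answers(ocr_text):
    """Split recognised text into {question_number: answer_text}."""
    matches = list(QUESTION_LABEL.finditer(ocr_text))
    answers = {}
    for i, match in enumerate(matches):
        start = match.end()
        end = matches[i + 1].start() if i + 1 < len(matches) else len(ocr_text)
        number = int(match.group(1))
        text = ocr_text[start:end].strip()
        # If a student returns to a question later, append instead of overwriting.
        if number in answers:
            answers[number] = answers[number] + "\n" + text
        else:
            answers[number] = text
    return answers

sample = (
    "Q1. Photosynthesis uses sunlight.\n"
    "Q2) Force equals mass times acceleration.\n"
    "Q1. It also needs water."
)
print(map_answers(sample))
```

Keeping each answer attached to its question number is what allows marks and partial credit to be reported per question.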

Step 4 — Rubric approval

The teacher reviews and approves the marking rubric — adjusting expected points, marks per question, strictness, and partial credit rules. This is where fairness is designed into the process.
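To make the idea concrete, here is a minimal sketch of a rubric with expected points, marks per point, and a partial-credit switch, applied unchanged to every paper in a batch. The class names are hypothetical, strictness is left out for brevity, and keyword matching is used only as a stand-in for the actual evaluation, which the article does not describe.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ExpectedPoint:
    description: str
    keywords: tuple   # any one keyword counts as covering the point
    marks: float

@dataclass(frozen=True)
class QuestionRubric:
    max_marks: float
    points: tuple
    allow_partial: bool = True

def score_answer(answer_text, rubric):
    """Score one answer. Keyword matching is a placeholder for real evaluation."""
    text = answer_text.lower()
    covered = [p for p in rubric.points
               if any(k.lower() in text for k in p.keywords)]
    if not rubric.allow_partial:
        # All-or-nothing: full marks only if every expected point is covered.
        return rubric.max_marks if len(covered) == len(rubric.points) else 0
    return min(sum(p.marks for p in covered), rubric.max_marks)

q1 = QuestionRubric(
    max_marks=3,
    points=(
        ExpectedPoint("mentions sunlight", ("sunlight", "light energy"), 1),
        ExpectedPoint("mentions chlorophyll", ("chlorophyll",), 1),
        ExpectedPoint("names glucose as a product", ("glucose",), 1),
    ),
)

batch = [
    "Plants use sunlight and chlorophyll.",
    "Chlorophyll absorbs light energy to make glucose.",
]
# The same frozen rubric object is applied to every paper in the batch.
print([score_answer(answer, q1) for answer in batch])
```

Because the rubric is defined once and frozen, the first and last paper in a batch are scored by exactly the same rules.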

Step 5 — Evaluation and output

The AI marks each answer against the approved rubric and produces the following outputs:

- Marked PDF with per-question marks and remarks on the answer sheet

- Excel summary with total marks and feedback per student

- Batch analytics (optional) showing scoring distribution and weak questions

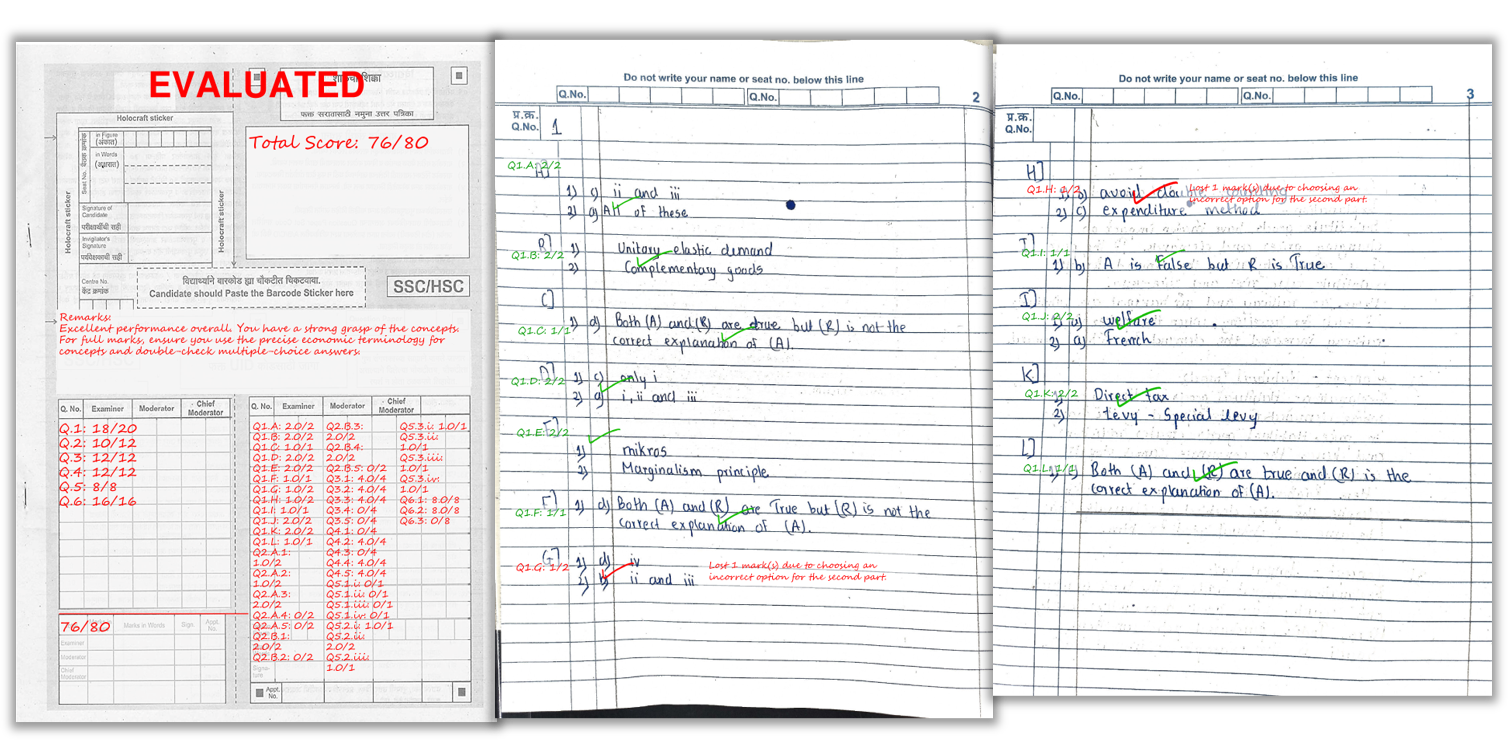

A real example of how marks and remarks appear on a student’s answer sheet after AI checking.
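The optional batch analytics from Step 5 can be pictured with a small sketch that counts students per score band and flags questions with a low average. The data layout, function names, and the 50% threshold are assumptions chosen for illustration, not the product's actual report format.

```python
from statistics import mean

def weak_questions(marks_by_student, max_marks, threshold=0.5):
    """Questions whose average score falls below threshold * max marks."""
    weak = []
    for question, question_max in max_marks.items():
        average = mean(marks[question] for marks in marks_by_student.values())
        if average / question_max < threshold:
            weak.append(question)
    return sorted(weak)

def score_distribution(marks_by_student, total_max, bands=4):
    """Count students in each equal-width band of total percentage."""
    counts = [0] * bands
    for marks in marks_by_student.values():
        fraction = sum(marks.values()) / total_max
        index = min(int(fraction * bands), bands - 1)  # 100% goes in the top band
        counts[index] += 1
    return counts

# Made-up marks for three papers on a two-question test.
marks_by_student = {
    "paper-a": {1: 3, 2: 1},
    "paper-b": {1: 2, 2: 2},
    "paper-c": {1: 3, 2: 0},
}
max_marks = {1: 3, 2: 5}

print(weak_questions(marks_by_student, max_marks))
print(score_distribution(marks_by_student, total_max=8))
```

A question flagged as weak across the whole batch usually points to a topic worth re-teaching, or to a rubric that needs a second look.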

What AI Can and Cannot Do — Honest Expectations

AI reduces the main sources of human grading variance but is not a perfect system. The most reliable results come from a strong teacher-approved rubric and good scan quality. Two honest limitations to be aware of:

- Rubric bias: if the rubric itself is too narrow or misses valid approaches, AI will consistently apply that narrow rubric. The quality of the marking scheme still depends on the teacher.

- Scan quality: very poor scans reduce OCR accuracy, which can affect marks. This is manageable with a consistent scanning routine but should be addressed before the first exam.

Within those constraints, AI marking is substantially more consistent than manual checking at the volumes typical of Indian schools and coaching institutes.

Scanning Tips for Fair and Consistent Checking

- Bright, even lighting: avoid shadows across the writing

- Flat pages: no curvature near the binding

- Full page visible: all four corners in frame

- Correct page order: scan sequentially

- Encourage legibility: students who leave space between answers and write clearly produce better OCR results

Frequently Asked Questions

Does AI remove grading bias completely?

AI significantly reduces the main sources of human grading bias (examiner fatigue, halo effect, anchoring, leniency drift, and time pressure) by applying the same rubric consistently. However, bias in the rubric itself can still exist. AI enforces the teacher's decisions consistently but does not independently eliminate subjective choices made in the rubric design.

Can AI mark subjective answers fairly?

Yes, when evaluation is rubric-based with clearly defined expected points and partial marking rules. The rubric defines what fairness looks like — AI applies it without variation.

How does AI handle neat versus messy handwriting?

AI does not award extra marks for neat presentation. Only content and concept coverage affect the score. Neat handwriting and clean scans improve OCR accuracy, but they do not inflate marks the way they can in manual checking.

What outputs do teachers receive?

Marked PDFs with per-question scores and remarks on the answer sheet, plus an Excel summary with total marks and overall feedback per student.

Can institutions anonymise student identity before AI checking?

Yes. Student names and roll numbers can be stripped before evaluation so scoring is based purely on content. E-Valuate supports anonymised workflows.

Is AI-checked marking accepted for official board exams?

Currently, AI checking is used for internal assessments, unit tests, prelims, and coaching institute mock tests — not official board evaluations. It is an established tool for the high-volume practice checking that teachers do throughout the academic year.

Ready to automate your answer sheet checking?

E-Valuate AI processes handwritten papers for schools and coaching institutes across India. Sign up free and get 3,000 credits (worth ₹3,000) included.
