Why Handwritten Answer Sheet Checking Is Still a Problem in 2026

India conducts more written exams than almost any country on earth. Across 1.5 million schools, 40,000+ coaching institutes, and thousands of colleges, handwritten answer sheets are the dominant exam format — not multiple choice, not digital submissions, not typed answers. Students write. Teachers check.

For a single teacher handling three sections of 40 students, one unit test produces 120 answer booklets. At 8 pages each, that is 960 pages to read, evaluate, score, annotate, and enter into a record — on top of teaching, planning, and administration. Multiply this across a 40-week academic year with unit tests, class tests, half-yearlies, and prelims, and the checking burden is enormous.
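The per-test figure comes from multiplying sections, students, and pages. The Python sketch below reproduces that arithmetic. `tests_per_year` is a hypothetical input added for illustration. It is not a number from this article.

```python
def pages_to_check(sections: int, students_per_section: int, pages_per_booklet: int) -> int:
    """Total answer-sheet pages one teacher reads for a single test."""
    booklets = sections * students_per_section
    return booklets * pages_per_booklet


# Figures from the example above: 3 sections of 40 students, 8-page booklets.
per_test = pages_to_check(sections=3, students_per_section=40, pages_per_booklet=8)
print(per_test)  # 960

# Hypothetical: number of checked tests a teacher handles in a year (an assumption).
tests_per_year = 10
print(per_test * tests_per_year)  # 9600
```

To estimate your own yearly load, change `tests_per_year` to match your school calendar.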

The consequences are visible everywhere: results take a week or more, detailed feedback is rationed because there is no time, consistency drops as fatigue sets in across a long checking session, and students sit the next test before they have meaningful feedback from the last one.

AI handwritten answer sheet checking addresses every one of these problems — without changing the exam format students and teachers already use.

How AI Reads and Checks Handwritten Answer Sheets

The process involves three technical layers working in sequence: handwriting recognition, layout understanding, and meaning evaluation. Together they replicate — and in many ways improve on — what a human examiner does.

Layer 1 — OCR and HTR (reading the handwriting)

Optical Character Recognition (OCR) handles printed or typed text. Handwritten Text Recognition (HTR) handles the variability of student handwriting — different styles, slant, ink pressure, letter shapes, and page conditions. Modern HTR models are trained on vast datasets of diverse handwriting and use surrounding context to resolve ambiguous characters, much like a human reader fills in a word from context.
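Here is a toy illustration of context resolving an ambiguous character. The sketch restricts a reading to words in a known vocabulary, which is lexicon-constrained decoding reduced to a few lines. This simplification was chosen for clarity. Production HTR models learn context inside the network, and nothing here describes E-Valuate's internals. The confidence scores are invented for the example.

```python
from math import prod
from typing import Optional

# One handwritten word as a recogniser might see it: for each character
# position, candidate letters with confidence scores (illustrative values).
Candidates = list[dict[str, float]]


def best_vocab_word(candidates: Candidates, vocabulary: list[str]) -> Optional[str]:
    """Toy lexicon-constrained decoding.

    Each vocabulary word of the right length scores the product of the
    confidences for its letters. A letter the recogniser never proposed
    scores 0 and rules the word out.
    """
    best, best_score = None, 0.0
    for word in vocabulary:
        if len(word) != len(candidates):
            continue
        score = prod(position.get(ch, 0.0) for position, ch in zip(candidates, word))
        if score > best_score:
            best, best_score = word, score
    return best


def greedy_read(candidates: Candidates) -> str:
    """Pick the top-scoring letter at each position, ignoring context."""
    return "".join(max(position, key=position.get) for position in candidates)


# The second letter is ambiguous: 'c' scores slightly higher than 'e'.
scribble = [{"c": 0.9}, {"c": 0.55, "e": 0.45}, {"l": 0.8, "i": 0.2}, {"l": 0.9}]
print(greedy_read(scribble))                                # ccll
print(best_vocab_word(scribble, ["cell", "call", "coil"]))  # cell
```

Reading letter by letter gives the non-word "ccll". Constraining the reading to known words recovers "cell", much as a human reader would.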

Layer 2 — Vision-Language Models (understanding the page)

A Vision-Language Model (VLM) analyses the spatial structure of the scanned page: identifying question number headings, answer regions, underlined text, diagram areas, and where one answer ends and the next begins. This is what makes question mapping reliable — the system understands the layout, not just the text characters.

Layer 3 — NLP (evaluating meaning)

Once each answer is extracted and mapped to its question, Natural Language Processing (NLP) evaluates whether the meaning matches the rubric. For subjective answers, the AI does not require exact wording. It checks whether the student expressed the correct concept, included the required key points, and avoided factual errors. This is semantic evaluation — meaning-first, not keyword-matching.
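One common way to implement meaning-first matching is to compare embedding vectors rather than words. The sketch below assumes a sentence-embedding model has already converted each answer and key point into a vector. The vectors, the 0.8 threshold, and the function names are illustrative assumptions. They are not confirmed details of how E-Valuate works.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def covers_key_point(answer_vec: list[float], key_point_vec: list[float], threshold: float = 0.8) -> bool:
    """Treat a key point as present when the answer's meaning vector is close enough."""
    return cosine_similarity(answer_vec, key_point_vec) >= threshold


# Illustrative 3-dimensional vectors. A real system would get these from a
# sentence-embedding model (hypothetical here) with hundreds of dimensions.
key_point = [0.9, 0.1, 0.0]     # "plants make food using sunlight"
paraphrase = [0.85, 0.2, 0.05]  # same idea in the student's own words
off_topic = [0.0, 0.1, 0.95]    # unrelated sentence
print(covers_key_point(paraphrase, key_point))  # True
print(covers_key_point(off_topic, key_point))   # False
```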

The Complete Checking Pipeline — Step by Step

Step 1: Teacher sets up the exam

The teacher uploads the question paper and model answers. The AI generates a draft rubric with per-question marks, expected key points, and suggested partial marking rules. The teacher reviews and approves it — this is the only step that requires teacher input before papers arrive.
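A draft rubric of this kind maps naturally onto a small data structure. The sketch below is a hypothetical model; the class and field names are assumptions, not E-Valuate's format. It shows per-question marks and key points, plus an approval flag that must be set before any paper is checked.

```python
from dataclasses import dataclass, field


@dataclass
class KeyPoint:
    text: str
    marks: float


@dataclass
class QuestionRubric:
    question_id: str  # e.g. "Q3(b)"
    max_marks: float
    key_points: list[KeyPoint] = field(default_factory=list)


@dataclass
class ExamRubric:
    questions: list[QuestionRubric]
    approved: bool = False  # stays False until the teacher has reviewed the draft

    def total_marks(self) -> float:
        return sum(q.max_marks for q in self.questions)

    def approve(self) -> None:
        self.approved = True


def start_checking(rubric: ExamRubric) -> str:
    """Refuse to mark papers against a draft the teacher has not approved."""
    if not rubric.approved:
        raise RuntimeError("rubric has not been approved by the teacher")
    return f"checking against {len(rubric.questions)} questions, {rubric.total_marks()} marks"


draft = ExamRubric([
    QuestionRubric("Q1", 2, [KeyPoint("correct definition", 1), KeyPoint("relevant example", 1)]),
    QuestionRubric("Q2", 3, [KeyPoint("formula stated", 1), KeyPoint("correct substitution", 1),
                             KeyPoint("final answer with unit", 1)]),
])
draft.approve()  # the teacher's review step
print(start_checking(draft))  # checking against 2 questions, 5 marks
```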

Step 2: Students scan and submit via mobile app

Students open the E-Valuate app, enter the exam code, and scan their answer sheets page by page using their phone camera. No special scanner, no printing, no physical collection. Each student gets an upload confirmation. Staff-managed scanning is also supported for situations where students do not self-scan.

Step 3: AI reads the handwriting (OCR/HTR)

Each uploaded PDF is processed by the recognition stack. Handwriting is converted to readable text; the VLM layer identifies the page layout and locates each answer region relative to its question number.

Step 4: Question mapping

Every text segment is matched to its correct question and sub-question — even if the student answered out of order, continued across pages, or wrote in margins. Accurate mapping is what makes per-question marks and step-wise partial credit reliable.
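The pipeline described here maps answers from page layout using a vision-language model. The sketch below is a much simpler, text-only illustration of the same bookkeeping. It assigns recognised lines to question labels and carries an answer across a page break. The `Q<number>(<part>)` label format and the function name are assumptions. An answer line that happens to begin with such a label would be misread, which is one reason layout cues matter.

```python
import re

# Assumed label format: "Q" followed by a number and an optional part letter,
# e.g. "Q1.", "Q 2", "Q3(b)". Lines without such a label continue the current answer.
LABEL = re.compile(r"^\s*Q\.?\s*(\d+)\s*(?:\(([a-z])\))?[.):]?\s*(.*)$", re.IGNORECASE)


def map_answers(pages: list[list[str]]) -> dict[str, list[str]]:
    """Group recognised lines by question, carrying answers across page breaks."""
    answers: dict[str, list[str]] = {}
    current = None
    for page in pages:
        for line in page:
            match = LABEL.match(line)
            if match:
                number, part, rest = match.groups()
                current = f"Q{number}" + (f"({part.lower()})" if part else "")
                answers.setdefault(current, [])  # revisiting a question appends to it
                if rest:
                    answers[current].append(rest)
            elif current is not None:
                answers[current].append(line.strip())
    return answers


pages = [
    ["Q1. Ohm's law states that", "V = IR at constant temperature."],
    ["Q3(b) Chlorophyll absorbs light", "Q2 Resistance increases with length"],
    ["and decreases with cross-sectional area."],
]
for question, lines in map_answers(pages).items():
    print(question, lines)
```

Q3(b) answered before Q2 is still filed correctly. The last line on page 3 stays with Q2 because no new label appears.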

Step 5: Rubric evaluation and partial marking

The AI compares each answer against the approved rubric. Marks are awarded for key points present; partial credit is given where some but not all points are covered; short remarks are generated where marks are deducted — "definition missing," "no example," "incorrect step 2," "concept correct but incomplete."
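Key-point scoring with partial credit reduces to two steps: sum the marks for points judged present, then list remarks for the rest. The sketch below assumes an upstream evaluator has already decided which key points appear; the `found` set is hypothetical input. Step-wise marking for numericals fits the same shape if each step is treated as a key point with its own marks.

```python
def score_answer(
    key_points: dict[str, float],
    found: set[str],
    remarks: dict[str, str],
) -> tuple[float, list[str]]:
    """Award marks per key point present; collect a remark for each missing one.

    key_points: key point -> marks allocated in the approved rubric
    found:      key points the evaluator judged present in the answer
    remarks:    key point -> short remark to show when that point is missing
    """
    awarded = sum(marks for point, marks in key_points.items() if point in found)
    missing = [remarks.get(point, f"{point} missing") for point in key_points if point not in found]
    return awarded, missing


q2_rubric = {"definition": 1.0, "example": 1.0, "diagram": 1.0}
q2_remarks = {"definition": "definition missing", "example": "no example"}
marks, comments = score_answer(q2_rubric, {"definition", "diagram"}, q2_remarks)
print(marks, comments)  # 2.0 ['no example']
```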

Step 6: Output generation

A marked PDF is generated for each student — with scores and remarks on the actual answer sheet, exactly as a human examiner would annotate it. An Excel summary is compiled for the full batch. Both are available for download as soon as processing completes.

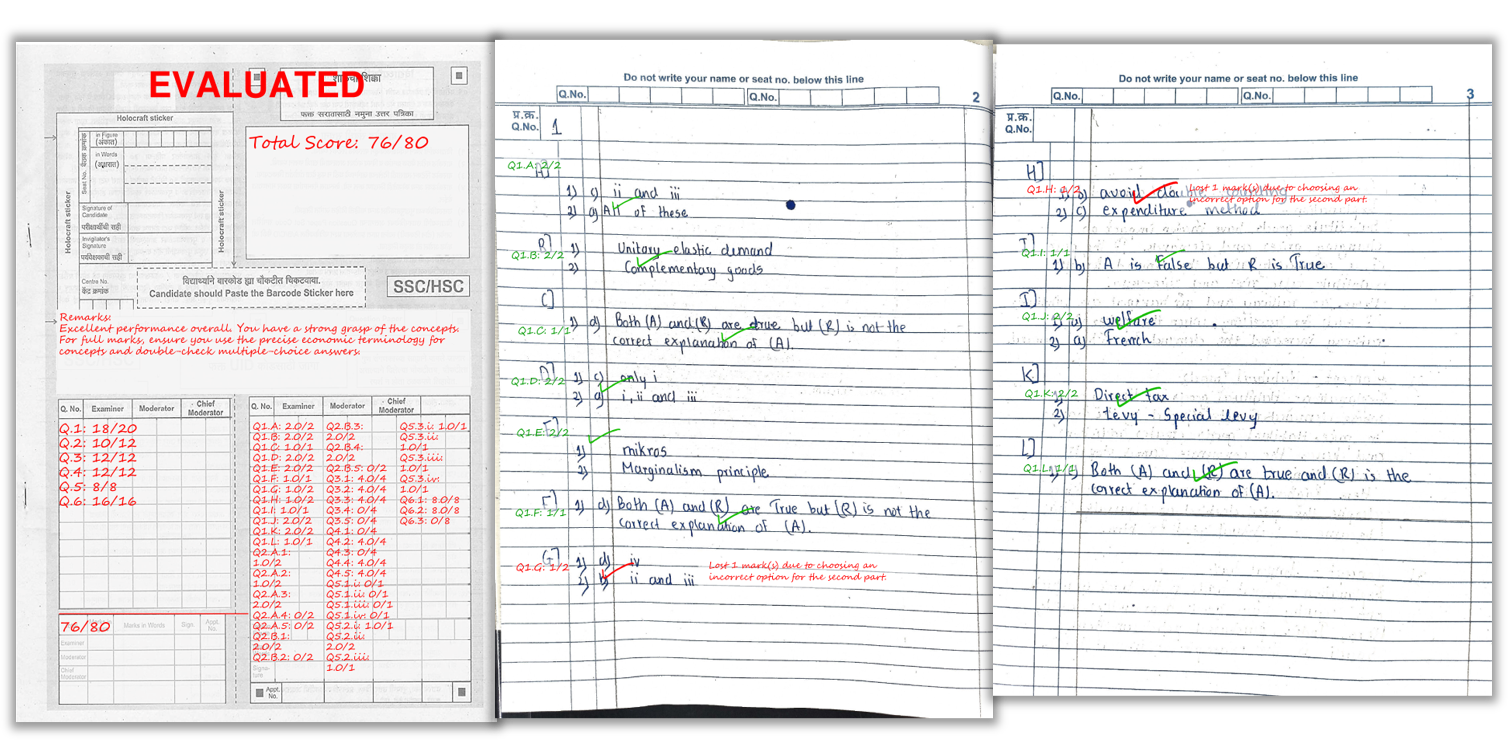

A real example of how per-question scores and remarks appear on a student’s handwritten answer sheet after AI checking.

What Teachers Receive After AI Checking

Marked PDF — one per student

Each student's own answer sheet comes back annotated: per-question scores written beside each answer, short remarks explaining deductions, and a summary score at the end. Students can see exactly where they lost marks and why — the same information they would get from a manually checked paper, delivered in hours instead of days.

Excel summary — one for the full batch

A single Excel file with every student's name, total marks, question-wise breakdown, and overall feedback. No manual data entry. Ready to share with students, parents, or administration, or to upload directly into any records system.
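A batch summary like this is a straightforward table export. The sketch below writes CSV with Python's standard `csv` module, because Excel opens CSV files directly. Producing a native `.xlsx` file would need a third-party library such as openpyxl. The column names and function name are assumptions, not E-Valuate's actual export format.

```python
import csv
import io


def summary_csv(
    question_ids: list[str],
    results: dict[str, dict[str, float]],
    feedback: dict[str, str],
) -> str:
    """Build a batch summary: one row per student, question-wise marks, total, feedback."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["Student", *question_ids, "Total", "Feedback"])
    for student, marks in results.items():
        row = [marks.get(q, 0) for q in question_ids]  # unanswered questions score 0
        writer.writerow([student, *row, sum(row), feedback.get(student, "")])
    return buffer.getvalue()


results = {"Asha": {"Q1": 2, "Q2": 3}, "Ravi": {"Q1": 1}}
print(summary_csv(["Q1", "Q2"], results, {"Asha": "Strong answers"}))
```

Passing `question_ids` explicitly keeps columns in paper order. Sorting labels as strings would put "Q10" before "Q2".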

Batch analytics (optional)

Question-wise performance across the class — showing which questions had the lowest average scores, which topics produced the most errors, and where the class is collectively weakest. This is actionable intelligence for planning the next revision session, available the same day as the exam.
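Finding the class's weakest questions amounts to averaging each question's scores and ranking them. The sketch below assumes per-student marks are already available; the names and sample data are hypothetical. Dividing by each question's maximum lets a 2-mark question be compared fairly with a 4-mark one.

```python
from statistics import mean


def weakest_questions(
    results: dict[str, dict[str, float]],
    max_marks: dict[str, float],
) -> list[tuple[str, float]]:
    """Rank questions by class average as a fraction of max marks, weakest first."""
    ranking = []
    for question, out_of in max_marks.items():
        scores = [marks.get(question, 0) for marks in results.values()]
        ranking.append((question, mean(scores) / out_of))
    return sorted(ranking, key=lambda item: item[1])


results = {
    "Asha": {"Q1": 2, "Q2": 1},
    "Ravi": {"Q1": 1, "Q2": 0},
    "Meera": {"Q1": 2, "Q2": 2},
}
for question, share in weakest_questions(results, {"Q1": 2, "Q2": 4}):
    print(f"{question}: {share:.0%} of available marks")
```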

Boards, Classes, and Subjects Supported

AI answer sheet checking works for all boards and all subjects. There is no board-specific configuration — the rubric adapts to any marking scheme the teacher provides.

| Board | Classes Supported | Exam Types |
|---|---|---|
| CBSE | Class 6–12 | Unit tests, half-yearly, prelims, SA, LA formats |
| ICSE / ISC | Class 6–12 | All patterns including structured and essay |
| SSC Maharashtra | Class 5–12 | SSC, HSC, internal assessments |
| All state boards | Class 6–12 | Any board — rubric adapts to marking scheme |
| College internal | UG / PG | Internal assessments, mid-term, end-term theory |
| Coaching institutes | All levels | Mock tests, test series, prelim preparation |

Manual Checking vs AI Checking — The Full Comparison

| Factor | Manual Checking | AI Checking (E-Valuate) |
|---|---|---|
| Time for 100 papers | 2–5 days | Under 2 hours |
| Consistency | Varies with fatigue, anchoring, drift | Same rubric, every paper, no drift |
| Feedback per student | Decreases as checking session lengthens | Same level of remarks for every student |
| Partial marks | Inconsistent across teachers and sessions | Consistent: rubric defines the rules |
| Results record (Excel) | Manual data entry required | Auto-generated, zero data entry |
| Bias | Handwriting, presentation affect marks | Content-only scoring |
| Hardware needed | None | Phone camera, no scanner required |
| Cost (100 students, 8 pages each) | Teacher time | ₹1,600 total (₹16 per paper at ₹2/page) |

What Makes AI Checking Accurate — and What Affects It

Two factors account for the vast majority of accuracy variation in AI handwritten checking — and both are within the school's control:

Scan quality

Clean scans produce accurate OCR. Dark, skewed, or cropped pages reduce text extraction quality, which flows downstream into marking accuracy. The solution is a consistent scanning routine briefed to students before exam day — it takes 5 minutes and prevents most accuracy issues.

- Bright, even lighting — no shadows across the writing

- Pages completely flat — no curvature near the binding

- Full page in frame — all four corners visible

- Scan sequentially — page order supports question mapping

- Dark ink — faint pencil reduces OCR quality significantly
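Some of these checks can be automated before upload. The sketch below works on a grayscale pixel grid and flags pages that are too dark or too low in contrast (the faint-pencil case). The thresholds are illustrative assumptions, not values from E-Valuate. Detecting skew, curvature, and cropping would need real image-processing code.

```python
from statistics import mean, pstdev


def scan_warnings(
    gray: list[list[int]],
    min_brightness: float = 120,
    min_contrast: float = 30,
) -> list[str]:
    """Flag likely scan problems in a grayscale page (0 = black, 255 = white).

    Both thresholds are illustrative assumptions, not tuned values.
    """
    pixels = [p for row in gray for p in row]
    warnings = []
    if mean(pixels) < min_brightness:
        warnings.append("too dark: add light and avoid shadows")
    if pstdev(pixels) < min_contrast:
        warnings.append("low contrast: use dark ink, not faint pencil")
    return warnings


dark_page = [[40] * 10 for _ in range(10)]
inked_page = [[250] * 8 + [20] * 2 for _ in range(10)]    # white paper, dark strokes
pencil_page = [[230] * 8 + [200] * 2 for _ in range(10)]  # white paper, faint strokes
print(scan_warnings(dark_page))
print(scan_warnings(inked_page))  # []
print(scan_warnings(pencil_page))
```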

Rubric quality

The rubric defines what the AI checks for. A rubric with clearly defined key points, explicit partial marking rules, and calibrated marks per question produces consistent, fair results. A vague rubric — "award marks for correct answer" without defining what correct means — produces variable results. Investing 10 minutes in rubric review before each exam is the single highest-return action a teacher can take.
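Parts of a rubric review can be checked mechanically. The hypothetical lint below flags three problems: questions with no key points, key-point marks that do not add up to the question total, and questions where a single key point carries all the marks, leaving no room for partial credit. The dictionary layout and messages are assumptions.

```python
import math


def lint_rubric(rubric: dict[str, dict]) -> list[str]:
    """Return human-readable problems found in a rubric; an empty list means none found."""
    problems = []
    for qid, spec in rubric.items():
        points = spec.get("key_points", {})
        if not points:
            problems.append(f"{qid}: no key points defined")
            continue
        allocated = sum(points.values())
        if not math.isclose(allocated, spec["max_marks"]):
            problems.append(f"{qid}: key points total {allocated}, question is worth {spec['max_marks']}")
        if len(points) == 1 and spec["max_marks"] > 1:
            problems.append(f"{qid}: all {spec['max_marks']} marks rest on one key point; no partial credit possible")
    return problems


rubric = {
    "Q1": {"max_marks": 2, "key_points": {"definition": 1, "example": 1}},
    "Q2": {"max_marks": 5, "key_points": {"correct answer": 5}},
    "Q3": {"max_marks": 3, "key_points": {"formula": 1, "substitution": 1}},
    "Q4": {"max_marks": 4},
}
for problem in lint_rubric(rubric):
    print(problem)
```

Q2 is the "award marks for correct answer" case: it passes the arithmetic check but gives the evaluator nothing to award partial credit against.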

Who Uses AI Handwritten Answer Sheet Checking

Schools (Class 6–12)

Unit tests, class tests, half-yearly exams, and prelims. The biggest benefit for schools is the ability to run more frequent practice tests without increasing teacher workload. More frequent tests with faster feedback give students more practice and correction before their board exams.

Coaching institutes

Weekly or bi-weekly mock test series for JEE, NEET, CA, CS, CMA, UPSC, and board exam preparation. Coaching institutes often have the most acute volume problem — 80 to 200 students per batch, tests every week, teachers already stretched thin. AI checking is most transformative in this segment.

Colleges and universities

Internal assessments, mid-term theory papers, and end-term written examinations. College faculty handling multiple subjects across multiple divisions benefit from consistent marking and the auto-generated Excel summary for grade records.

Getting Started — What You Need

No special hardware, no IT infrastructure, no long setup process. What you need:

- Question paper + model answer: used to generate the rubric

- E-Valuate account: free signup, credits included

- Student phones: any Android or iOS device with the E-Valuate app

- 5-minute scanning briefing: tell students the scanning routine before exam day

Most schools and coaching institutes complete their first AI-checked test within the first week of trying the platform, often on the same day they sign up.

Frequently Asked Questions

How accurate is AI for checking handwritten answer sheets?

For clear handwriting and good-quality scans, AI checking accuracy is high enough for reliable marking across most subjects and exam formats. The two variables that most affect accuracy are scan quality and rubric quality — both within the school's control. When both are controlled, AI checking is more consistent than manual checking at scale because it applies the same rubric without fatigue or drift.

Which boards and classes does AI answer sheet checking support?

All Indian boards — CBSE, ICSE, SSC Maharashtra, and all state boards — across Class 6 to Class 12, and for college internal assessments. It also supports competitive exam mock tests for JEE, NEET, CA Foundation, CS, CMA, and UPSC practice.

Can AI award partial marks for handwritten answers?

Yes. When the teacher's rubric defines key points and partial marking rules, AI awards partial credit for partially correct answers. Step-wise partial marking is also supported for numericals and derivations where each step has its own mark allocation.

Does AI checking work without a special scanner?

Yes. Students submit via the E-Valuate mobile app using any phone camera. No dedicated scanner or special hardware is required. A flatbed scanner is supported for institutions that prefer staff-managed scanning, but phone scanning is sufficient for most use cases.

What outputs does a teacher receive after AI checking?

A marked PDF for each student, with per-question scores and remarks on the actual answer sheet, and an Excel summary with total marks, a question-wise breakdown, and overall feedback for the full batch. Optional batch analytics showing question-wise performance across the class are also available.

How long does AI take to check 100 handwritten answer sheets?

AI processing for 100 papers of 6 to 8 pages takes 45 to 75 minutes. Including student scanning (15 to 25 minutes), the total from exam end to results in hand is roughly 60 to 100 minutes, compared to 2 to 5 days manually.
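The end-to-end range is the sum of the two stages. The check below reproduces that arithmetic, assuming scanning finishes before AI processing begins. If the stages overlap, the total would be shorter.

```python
def turnaround_minutes(processing: tuple[int, int], scanning: tuple[int, int]) -> tuple[int, int]:
    """Best- and worst-case minutes from exam end to results, stages run back to back."""
    return processing[0] + scanning[0], processing[1] + scanning[1]


print(turnaround_minutes(processing=(45, 75), scanning=(15, 25)))  # (60, 100)
```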

Is AI answer sheet checking suitable for subjective long-answer papers?

Yes. Rubric-based AI checking works well for subjective long-answer papers when the teacher defines expected key points and concept coverage requirements. The AI evaluates meaning — not exact wording — so students are not penalised for expressing correct answers in their own words.

What is the cost of AI answer sheet checking in India?

E-Valuate AI charges ₹2 per page with credits that never expire and no minimum commitment. For a 40-student class with 8-page answer sheets, that is ₹640 per test — less than the cost of printing the papers. No subscription, no monthly fee.
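Per-page pricing makes the cost a single multiplication. The sketch below uses the ₹2-per-page rate quoted here; the function name is illustrative.

```python
PRICE_PER_PAGE_INR = 2  # rate quoted in this article


def checking_cost(students: int, pages_per_sheet: int, price_per_page: int = PRICE_PER_PAGE_INR) -> int:
    """Total rupees to check one test for a batch of students."""
    return students * pages_per_sheet * price_per_page


print(checking_cost(40, 8))   # 640, the 40-student example above
print(checking_cost(100, 8))  # 1600, the 100-student comparison
```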

Start checking handwritten answer sheets with AI today

E-Valuate AI is built for Indian schools and coaching institutes. Sign up free and get 3,000 credits (worth ₹3,000), enough to check your first 1,500 pages free at the ₹2-per-page rate.
